Microsoft Windows has long been the go-to operating system for gamers. Whenever we say “gaming PC”, we usually picture a Windows machine. This is because Windows supports a wide range of hardware (CPUs and graphics cards) and offers well-designed, industry-standard APIs for game development. Microsoft also continually introduces hardware and software optimization features to enhance user experience and system performance. One such feature that has garnered attention from gamers and enthusiasts alike is “Hardware-Accelerated GPU Scheduling.”

With the rise of gaming as a mainstream activity and the demands of resource-intensive applications, the efficient allocation of system resources has become paramount. This feature, introduced by Microsoft, aims to optimize the interplay between the CPU and GPU, offering potential benefits in responsiveness, latency reduction, and overall performance. But what is Hardware-Accelerated GPU Scheduling? How do you enable it? How does it affect performance? Let us answer these questions in this guide.

Outline

- What is Scheduling?

- Understanding GPU Scheduling

- Challenges in Traditional Software-Based Scheduling

- Need for Hardware Acceleration

- What is Hardware-Accelerated GPU Scheduling?

- Requirements for Hardware-Accelerated GPU Scheduling

- How to Enable Hardware-Accelerated GPU Scheduling?

- Should you Enable Hardware Accelerated GPU Scheduling?

- Conclusion

- FAQs

What is Scheduling?

Scheduling, in the context of computers and operating systems, refers to the process of determining the order and timing at which various tasks or processes are executed on a computer’s central processing unit (CPU) or other system resources. It plays a critical role in various computing systems, ranging from single-core CPUs to multi-core processors, GPUs, and distributed computing environments. The primary goal of scheduling is to optimize the utilization of system resources, enhance efficiency, and provide a responsive and fair resource allocation.

In a multitasking operating system environment, multiple processes or tasks compete for the limited resources available, such as CPU time, memory, and I/O operations. Scheduling algorithms play a crucial role in deciding which process to execute next and for how long, based on certain criteria.

CPU Scheduling involves selecting the next process from the queue of ready-to-execute processes to run on the CPU. The goal is to maximize CPU utilization, throughput, and responsiveness while minimizing waiting times and turnaround times.

- Preemptive Scheduling: The operating system can interrupt a running process and switch to another process based on priority or time quantum. Examples include Round Robin and Priority Scheduling.

- Non-preemptive Scheduling: A process holds the CPU until it voluntarily releases it. Examples include First-Come-First-Served (FCFS) and Shortest Job Next (SJN) Scheduling.
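The difference between the two styles can be sketched with a small simulation. This is only an illustrative model, not how any real operating system implements its scheduler: the job names, burst times, and the `quantum` value are made up for the example, and all jobs are assumed to arrive at time zero.

```python
from collections import deque


def fcfs(bursts):
    """Non-preemptive FCFS: each process runs to completion in arrival order."""
    clock = 0
    completion = {}
    for name, burst in bursts:
        clock += burst
        completion[name] = clock
    return completion


def round_robin(bursts, quantum):
    """Preemptive Round Robin: each process runs at most `quantum` units per turn."""
    queue = deque(bursts)
    clock = 0
    completion = {}
    while queue:
        name, remaining = queue.popleft()
        run = min(quantum, remaining)
        clock += run
        if remaining > run:
            # Preempted: go to the back of the queue with the leftover work.
            queue.append((name, remaining - run))
        else:
            completion[name] = clock
    return completion


# Hypothetical workload: (process name, CPU time needed)
jobs = [("A", 8), ("B", 2), ("C", 4)]
print("FCFS completion times:       ", fcfs(jobs))
print("Round Robin completion times:", round_robin(jobs, quantum=2))
```

In this toy workload, the short job "B" has to wait behind the long job "A" under FCFS, while Round Robin lets it finish much earlier at the cost of more frequent switching.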

I/O Scheduling involves managing the order in which I/O requests are serviced. The objective is to minimize the time processes spend waiting for I/O operations.

Understanding GPU Scheduling

GPU (Graphics Processing Unit) scheduling is a critical aspect of managing the execution of tasks on modern GPUs. In a typical graphics pipeline, multiple tasks, such as rendering, shading, and compute operations, are submitted by applications or the operating system to be processed by the GPU. The GPU execution pipeline consists of multiple stages that process tasks in parallel to achieve high throughput and performance.

This pipeline includes stages like vertex processing, geometry shading, rasterization, pixel shading, and memory access. Each stage involves specific tasks, such as transforming vertices, shading pixels, and managing memory operations. The GPU scheduler’s role is to efficiently manage the distribution of these tasks to the available processing units within the pipeline.

The execution units of a GPU, such as CUDA cores or shader units, operate in parallel, allowing multiple tasks to be processed simultaneously. Efficient scheduling ensures that these execution units are fully utilized and that data dependencies between tasks are managed to avoid conflicts. The result is better performance, lower latency, and higher resource utilization.

GPU Scheduling in Windows

On the Windows side, Microsoft developed the Windows Display Driver Model (WDDM), a driver architecture for graphics cards. It supports virtual memory, scheduling, sharing of Direct3D surfaces, and more.

Of all these features, the WDDM GPU Scheduler is especially important because it replaced the traditional “FIFO”-style queue with priority-based scheduling. Even in newer versions of WDDM, the scheduling approach stayed more or less the same: a high-priority thread running on the CPU coordinates and schedules the work submitted to the GPU.

This approach has some fundamental limitations. For example, for the GPU to work on an application’s commands at time ‘x’, the CPU must have prepared those commands at time ‘x-1’. So, while the GPU is working on frame ‘n’, the CPU is already preparing the commands for frame ‘n+1’.

Essentially, this buffering of GPU commands by the CPU reduces the scheduling overhead but has a significant impact on latency. User input picked up by the CPU will not be processed by the GPU until the next frame.
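To get a feel for the latency cost of this buffering, here is a rough back-of-the-envelope calculation. It assumes a deliberately simplified model in which every frame queued ahead of the GPU adds exactly one frame time of delay; real pipelines have other sources of latency, and the function names are just for illustration.

```python
def frame_time_ms(fps):
    """Time spent on one frame, in milliseconds, at a steady frame rate."""
    return 1000.0 / fps


def added_input_latency_ms(fps, frames_queued):
    """Extra delay before input shows up, assuming one frame time per queued frame."""
    return frames_queued * frame_time_ms(fps)


for fps in (30, 60, 144):
    delay = added_input_latency_ms(fps, frames_queued=1)
    print(f"{fps} FPS, 1 frame buffered: about {delay:.2f} ms of added latency")
```

Under this model, one buffered frame costs roughly 33 ms at 30 FPS but under 7 ms at 144 FPS, which is why the effect of buffering is felt most at lower frame rates.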

Challenges in Traditional Software-Based Scheduling

Schedulers have traditionally been part of the operating system; that is, they are essentially a piece of software. Even though hardware schedulers are available (in the form of programmable FPGAs or ASICs), they are confined mainly to hard real-time systems, where they typically support a single scheduling algorithm.

In traditional software-based scheduling, the operating system’s kernel manages the task queues and switches between tasks using software interrupts. This involves interactions between the CPU and GPU, leading to potential delays and overhead. Context switches, where the GPU switches from executing one task to another, can introduce latency and reduce overall system performance.

Traditional software-based GPU scheduling has limitations that can hinder performance and responsiveness.

- Software-based context switches involve communication between the CPU and GPU, leading to delays and overhead. This overhead becomes problematic when rapid task switching is required.

- As the number of CPU cores and GPU execution units increases, the complexity of managing and coordinating tasks also rises. Traditional software-based scheduling might struggle to efficiently handle this growing complexity.

- Preemption, which involves pausing one task to execute another, is challenging to implement efficiently in software. Coordinating preemption and task resumption can lead to inefficiencies.

- Inefficient memory management and allocation can lead to resource fragmentation, where the GPU’s memory is not optimally utilized. This can result in suboptimal performance and even out-of-memory errors.

- Traditional scheduling methods might lack fine-grained control over fairness, potentially leading to some tasks monopolizing resources while others are starved.

In response to these challenges, hardware-accelerated GPU scheduling offers a promising solution by offloading scheduling tasks to dedicated hardware components on the GPU, addressing these limitations and improving overall performance and efficiency.

Need for Hardware Acceleration

The need for hardware-accelerated GPU scheduling arises from the increasing demand for high-performance graphics and compute workloads. As applications become more complex and workloads diversify, traditional software-based scheduling mechanisms can struggle to effectively manage these demands. Several factors contribute to the need for hardware acceleration.

- Modern applications utilize a mix of graphics rendering, AI computations, physics simulations, and more. Coordinating these diverse workloads efficiently requires a more streamlined and optimized scheduling process.

- GPUs are designed for parallel processing, capable of executing numerous tasks simultaneously. However, software-based scheduling might not fully leverage this parallelism, leading to underutilization of GPU resources.

- Some applications, especially in real-time graphics and virtual reality, are sensitive to latency. Traditional scheduling with software intervention can introduce delays that impact user experience.

- With the growth of multi-core CPUs and GPUs with a higher number of processing units, managing numerous tasks efficiently becomes challenging for traditional software scheduling methods.

What is Hardware-Accelerated GPU Scheduling?

Hardware-accelerated GPU scheduling refers to the utilization of dedicated hardware components within the Graphics Processing Unit (GPU) to manage the allocation and execution of tasks. Unlike traditional software-based scheduling, where the CPU and operating system manage task queues and context switches, hardware-accelerated scheduling relies on specialized hardware to streamline these operations.

- By executing scheduling-related tasks directly on the GPU hardware, overhead associated with communication between the CPU and GPU is reduced. This leads to quicker task transitions and context switches.

- Hardware components can manage task queues and execution in parallel with GPU processing, enabling more tasks to be scheduled and executed simultaneously.

- Specialized hardware can allocate processing units and memory more efficiently, optimizing resource usage and improving overall performance.

In May 2020, Microsoft released an update for Windows that introduced Hardware-Accelerated GPU Scheduling as an option for supported graphics cards and drivers. With this update, Windows now has the ability to offload GPU scheduling to a dedicated hardware scheduler on the GPU.

While Windows still has control over the prioritization of tasks and decides which application has the priority for context switching, it offloads high-frequency tasks to the dedicated GPU Scheduler to handle context switching among various GPU engines.

Both NVIDIA and AMD (the two main graphics cards manufacturers) welcomed this move. They said that this new feature of moving scheduling jobs from software to hardware will improve the GPU performance, responsiveness and also reduce latency.

Requirements for Hardware-Accelerated GPU Scheduling

Implementing hardware-accelerated GPU scheduling involves several requirements to ensure its successful integration and operation within a computing system. These requirements encompass hardware, software, and compatibility considerations.

- Windows Version: Windows 10 version 2004 (May 2020 Update) or later is required to use the feature.

- GPU Compatibility: For NVIDIA graphics cards, a GPU from the 1000 series or later is needed. For AMD graphics cards, a GPU from the 5600 series or later is recommended.

- Display Drivers: Make sure that your graphics card’s display drivers (from either NVIDIA or AMD) are up to date for compatibility and optimal performance.

By meeting these requirements, users can take advantage of the Hardware Accelerated GPU Scheduling feature to enhance their system’s graphics performance, reduce latency, and improve overall responsiveness in applications and workloads that rely on the GPU.
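The Windows version requirement can also be checked programmatically. The following is a minimal sketch: it relies on the fact that Windows 10 version 2004 corresponds to build 19041, and it assumes that Python’s `platform.version()` returns a string of the form `10.0.<build>` on Windows. The helper names are made up for this example, and the script does not check the GPU or driver.

```python
import platform

# Windows 10 version 2004 (May 2020 Update) is build 19041.
MIN_BUILD = 19041


def parse_build(version_string):
    """Extract the build number from a string such as '10.0.19041'."""
    parts = version_string.split(".")
    if len(parts) < 3 or not parts[2].isdigit():
        return None
    return int(parts[2])


def meets_windows_requirement(version_string):
    """True if the build number is at least the May 2020 Update build."""
    build = parse_build(version_string)
    return build is not None and build >= MIN_BUILD


if platform.system() == "Windows":
    ok = meets_windows_requirement(platform.version())
    print("Windows build is new enough:", ok)
else:
    print("Not running on Windows; nothing to check.")
```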

How to Enable Hardware-Accelerated GPU Scheduling?

Enabling Hardware-Accelerated GPU Scheduling involves adjusting the display settings in Windows. If you have a supported graphics card with the correct drivers and the required Windows update, the feature will be available as an option. By default, it is disabled in both Windows 10 and Windows 11, but you can enable it from the settings. Here’s how to enable Hardware-Accelerated GPU Scheduling on a compatible system running Windows 11:

Prerequisites

- Make sure your Windows version is Windows 10 Version 2004 (May 2020 Update) or later. You can check your Windows version by typing “winver” in the Start menu search bar and pressing Enter.

- Verify that your GPU is from a supported series. For NVIDIA, it’s the 1000 series or later, and for AMD, it’s the 5600 series or later.

- Ensure that you have the latest graphics drivers installed for your GPU. You can download the latest drivers from the NVIDIA or AMD website.

Enable Hardware-Accelerated GPU Scheduling

To enable Hardware-Accelerated GPU Scheduling, follow these steps.

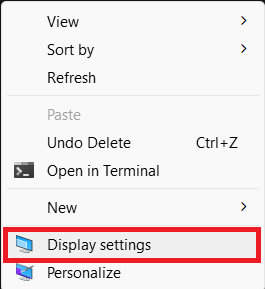

- Right-click on the desktop and select “Display settings.”

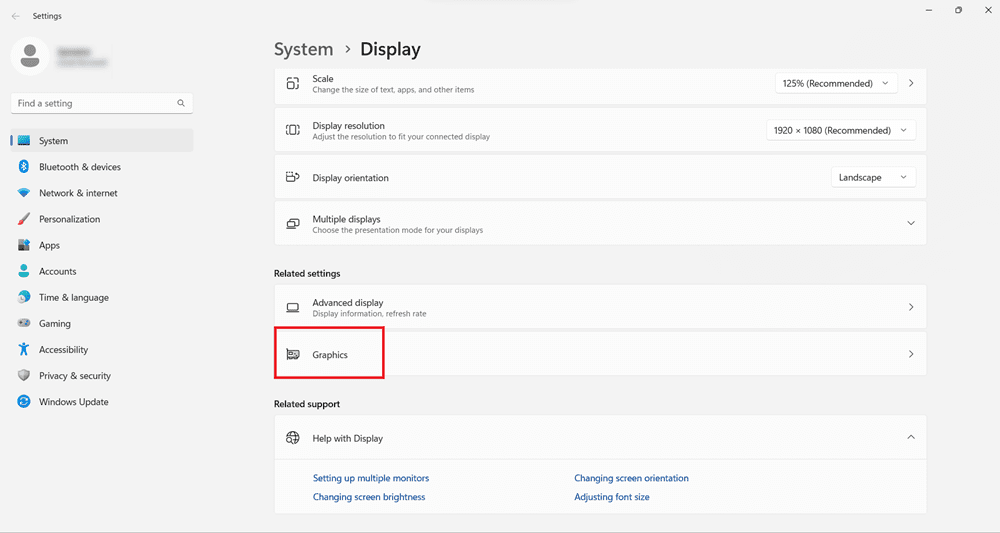

- Scroll down and click on “Graphics settings” or “Graphics adapter properties.”

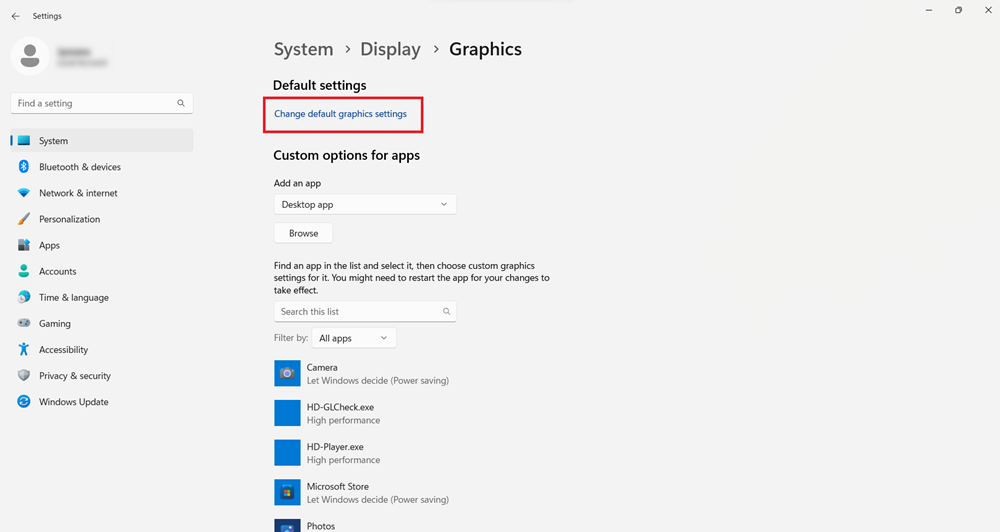

- In the Graphics settings or Adapter properties window, click the “Change default graphics settings” or “Advanced display settings” link.

- Click the “Hardware-Accelerated GPU Scheduling” toggle switch to turn it on.

After enabling Hardware-Accelerated GPU Scheduling, restart your computer to apply the changes.

Enable Hardware-Accelerated GPU Scheduling using Windows Registry

Before making any changes, create a backup of the Windows Registry to ensure you can restore it if needed.

- Press Windows key + R to open the Run dialog box.

- In the Run dialog, type “regedit” and press Enter.

- In the Registry Editor, navigate to the following path: Computer\HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\GraphicsDrivers

- On the right side, locate the “HwSchMode” value and double-click on it.

- Set the “Value data” to 2 and ensure that “Base” is set to “Hexadecimal.”

- After making changes to the Registry, restart your computer for the changes to take effect.

To disable the feature in the future, change the “HwSchMode” value from 2 back to 1.

This is an alternative method to enable Hardware-Accelerated GPU Scheduling by directly modifying the Windows Registry. However, as with any Registry edits, users should exercise caution and follow the instructions carefully to avoid unintended consequences.
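If you only want to check the current state without opening the Registry Editor, the same value can be read with a short script. This is a sketch under a few assumptions: it uses Python’s Windows-only `winreg` module, it only reads the value (it never writes to the Registry), and it treats 2 as enabled and 1 as disabled, as described in the steps above. The function names are made up for this example.

```python
KEY_PATH = r"SYSTEM\CurrentControlSet\Control\GraphicsDrivers"
VALUE_NAME = "HwSchMode"


def describe_hwsch_mode(value):
    """Translate the HwSchMode value into a human-readable state."""
    if value == 2:
        return "enabled"
    if value == 1:
        return "disabled"
    if value is None:
        return "not set"
    return f"unknown ({value})"


def read_hwsch_mode():
    """Return HwSchMode, or None if it cannot be read (non-Windows or value missing)."""
    try:
        import winreg  # Windows-only standard library module
    except ImportError:
        return None
    try:
        with winreg.OpenKey(winreg.HKEY_LOCAL_MACHINE, KEY_PATH) as key:
            value, _value_type = winreg.QueryValueEx(key, VALUE_NAME)
            return value
    except OSError:
        # The key or value does not exist, or access was denied.
        return None


print("Hardware-Accelerated GPU Scheduling:", describe_hwsch_mode(read_hwsch_mode()))
```

Reading the value does not require administrator rights in most setups, whereas changing it does, which is one reason the Settings toggle is usually the more convenient route.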

Should you Enable Hardware Accelerated GPU Scheduling?

For most Windows users, enabling Hardware Accelerated GPU Scheduling might not be necessary, but it can significantly benefit gamers. If your computer has a low or mid-tier CPU and experiences high CPU load in certain games, enabling Hardware Accelerated GPU Scheduling could be beneficial.

This feature offloads CPU tasks to the GPU, potentially alleviating strain on the CPU and improving overall performance. This setting may not improve the gaming experience for users with older CPUs and GPUs and might even worsen it.

Currently, only Windows computers running Windows 10 or newer and using reasonably new GPUs from Nvidia and AMD have access to this setting. While enabling Hardware-Accelerated GPU Scheduling can result in a small FPS gain (around 4 or 5 frames), the improvement might be less noticeable on newer hardware like the RTX 3060 (or higher tier graphics cards).
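How much a gain of 4 or 5 frames matters depends on the frame rate you start from. The short calculation below illustrates this; the baseline frame rates are hypothetical values chosen for the example, not measurements.

```python
def relative_gain_percent(base_fps, gained_fps):
    """FPS gain expressed as a percentage of the baseline frame rate."""
    return 100.0 * gained_fps / base_fps


def frame_time_saved_ms(base_fps, gained_fps):
    """Reduction in per-frame time, in milliseconds, after the gain."""
    return 1000.0 / base_fps - 1000.0 / (base_fps + gained_fps)


# Hypothetical baselines, each with a 5 FPS improvement.
for base in (40, 60, 144):
    pct = relative_gain_percent(base, 5)
    saved = frame_time_saved_ms(base, 5)
    print(f"{base} FPS + 5: {pct:.1f}% faster, {saved:.2f} ms less per frame")
```

The same 5 FPS is a much larger relative improvement at a low baseline than at a high one, which fits the observation that the benefit is harder to notice on faster hardware.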

We encourage users to try the setting and see whether it improves their system’s performance. If no issues arise after enabling Hardware-Accelerated GPU Scheduling, you can keep it turned on. While extra frames in games are not a guaranteed outcome, the reduction in CPU usage can contribute to smoother and more consistent performance. Microsoft suggests that major in-game differences may not be readily apparent, but the feature can have positive effects on CPU-related aspects. Despite the requirement for a restart, turning this setting on and off is relatively straightforward.

If the feature is not available for your system, there are alternative methods to enhance performance without upgrading hardware. For instance, you can disable frame buffering through in-game options or the GPU driver control panel. This can help maintain good visual quality on older hardware.

Conclusion

In the world of graphics processing, Hardware Accelerated GPU Scheduling stands as a notable stride towards optimizing resource allocation and elevating user experiences. It is a process of offloading the GPU-related scheduling tasks to a dedicated scheduler on the GPU rather than the CPU (or rather the operating system) taking care of it. When Microsoft first announced this feature, the whole gaming industry was extremely excited but the results and reviews were mixed (at least in the beginning).

In this guide, we saw how easy it is to enable (or disable) Hardware-Accelerated GPU Scheduling. You can experiment with this feature if you have the right GPU and driver combination and share your experience.

FAQs

What Are the Benefits of Enabling Hardware-Accelerated GPU Scheduling?

Answer: Enabling this feature can lead to reduced latency in graphics and compute tasks, improved overall system responsiveness, and better throughput for applications that rely on the GPU. It allows tasks to be managed more efficiently directly on the GPU, minimizing delays caused by CPU-GPU communication.

Is There a Risk in Enabling Hardware-Accelerated GPU Scheduling?

Answer: Enabling the feature itself shouldn’t pose a risk, as it is a supported functionality. However, as with any system changes, there’s a slight potential for compatibility issues or unexpected behavior. Always ensure you have a backup of your data and registry before making changes, and follow official instructions from trusted sources to minimize any risks.

Can I Enable Hardware-Accelerated GPU Scheduling on Laptops?

Answer: Yes, if your laptop’s GPU meets the compatibility requirements (NVIDIA 1000 series or later, AMD 5600 series or later) and your operating system version is compatible (Windows 10 version 2004 or later), you should be able to enable Hardware-Accelerated GPU Scheduling on laptops with dedicated GPUs.

Do All Games Benefit Equally from Hardware-Accelerated GPU Scheduling?

Answer: The benefit of Hardware-Accelerated GPU Scheduling can vary based on the workload. Games and applications that involve frequent context switches, real-time rendering, or heavy GPU utilization are likely to see the most significant improvements. Applications that are less GPU-intensive might show less noticeable effects.

Can I Disable Hardware-Accelerated GPU Scheduling After Enabling It?

Answer: Yes, you can disable Hardware-Accelerated GPU Scheduling by reversing the steps you took to enable it. For instance, you can toggle the “Hardware-Accelerated GPU Scheduling” switch in the graphics settings to off or, if you used the Windows Registry to enable it, set the “HwSchMode” value back to 1. Always restart your computer after making such changes for them to take effect.